How can a chatbot experience move from reading AI-generated paragraphs to easy-to-consume interactive and visual media?

Details and more concrete visuals of this project are under NDA.

What the work is

Stevie is Complexio’s data discovery and data inquiry agent. It had succeeded as a product that lets executives ask questions and generate reports about their operations, but it fell short on output: like any chatbot, it answered in text. When I joined the team in January 2026, I focused on the largest gap in the human experience of the product: what the agent could actually put on the screen in answer to a question.

My approach

Artifacts are not new in chatbots, but implementations vary, each with its own pros and cons. I started by scanning how comparable products handle artifacts and document generation, and built proofs of concept to evaluate the practical differences between running a sandbox and using an iframe srcdoc. This technology horizon scan let me build an artifact core quickly and spend my effort on the nitty-gritty: problems like automatically repairing broken React output and crafting the system prompt so it does not produce AI-slop interfaces.
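As an illustration of the srcdoc option only, and not the code that ships in Stevie, the sketch below builds the markup for an iframe whose content is passed inline through `srcdoc`. The function names (`escapeAttribute`, `buildDocument`, `buildIframe`) and the choice of sandbox tokens are assumptions made for this example.

```typescript
// Hypothetical sketch: none of these names come from the production codebase.

// Sandbox tokens for the iframe. "allow-scripts" lets generated code run;
// leaving out "allow-same-origin" keeps the frame in an opaque origin,
// away from the host page's cookies and storage.
const SANDBOX_FLAGS = ["allow-scripts"].join(" ");

// Escape text so it is safe inside a double-quoted HTML attribute.
function escapeAttribute(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Wrap a generated HTML fragment in a complete, standalone document.
function buildDocument(title: string, bodyHtml: string): string {
  return [
    "<!doctype html>",
    '<html lang="en">',
    `<head><meta charset="utf-8"><title>${escapeAttribute(title)}</title></head>`,
    `<body>${bodyHtml}</body>`,
    "</html>",
  ].join("\n");
}

// Produce the iframe markup a host page could insert next to the chat.
function buildIframe(title: string, bodyHtml: string): string {
  const srcdoc = escapeAttribute(buildDocument(title, bodyHtml));
  return `<iframe sandbox="${SANDBOX_FLAGS}" srcdoc="${srcdoc}"></iframe>`;
}
```

The appeal of this route is that the preview needs no extra server or URL: the whole document travels as a string, which is also the shape a self-contained offline export would need.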

My North Star was to beat the frontier labs’ artifact generation systems by turning one difference to our advantage: we are not building for the general public, but for a very specific set of customers. The constraint that organized the work: dashboards that don’t look like cheap AI artifacts. From the beginning I wanted Stevie’s outputs to avoid the purple, gradient-heavy slop aesthetic that models defaulted to at the time.

I codified an anti-slop ruleset in plain language (no thick colored left borders, minimal border-radius, no cards-in-cards, no rainbow status pills, no gratuitous shadows, no gradient backgrounds) and translated it into the production system prompt with explicit allow and deny lists. The discipline has a paper trail from a personal CLAUDE.md, into the prompt, into the dashboards customers see.
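A minimal sketch of how such a ruleset could be kept as data and rendered into a prompt section. The rule wording follows the list above; the `StyleRules` shape, the `renderStyleSection` function, the split into allow and deny entries, and the section headings are assumptions for illustration, not the production prompt.

```typescript
// Hypothetical sketch: the data shape and function name are illustrative.
interface StyleRules {
  allow: string[];
  deny: string[];
}

const antiSlopRules: StyleRules = {
  allow: ["minimal border-radius"],
  deny: [
    "thick colored left borders",
    "cards nested inside cards",
    "rainbow status pills",
    "gratuitous shadows",
    "gradient backgrounds",
  ],
};

// Render the rules as a plain-text block that can be appended to a system prompt.
function renderStyleSection(rules: StyleRules): string {
  const lines = [
    "Visual style rules:",
    "Allowed:",
    ...rules.allow.map((rule) => `- ${rule}`),
    "Never use:",
    ...rules.deny.map((rule) => `- ${rule}`),
  ];
  return lines.join("\n");
}
```

Keeping the rules as data rather than prose makes it easier to review changes and to reuse the same list elsewhere, for example in a lint step over generated output.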

What I built (production)

Artifact system. An interactive panel rendering generated React, HTML, Mermaid, and SVG in a live preview alongside the chat: preview-first, code hidden by default, downloadable as a self-contained offline HTML file. I architected the surface end-to-end: system prompt, backend detection, typed streaming event, client store and panel, sandboxed iframe, export path. This is the canonical anchor and what customers use today.
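To give a sense of what a typed streaming event and a client store can look like, here is a small sketch. The event names, fields, and the `reduce` function are hypothetical; the actual protocol is not described here.

```typescript
// Hypothetical event shapes, chosen for illustration only.
type ArtifactKind = "react" | "html" | "mermaid" | "svg";

type StreamEvent =
  | { type: "text"; delta: string }
  | { type: "artifact_start"; id: string; kind: ArtifactKind; title: string }
  | { type: "artifact_delta"; id: string; delta: string }
  | { type: "artifact_end"; id: string };

interface Artifact {
  kind: ArtifactKind;
  title: string;
  source: string;
  complete: boolean;
}

interface ChatState {
  text: string;
  artifacts: Record<string, Artifact>;
}

const initialState: ChatState = { text: "", artifacts: {} };

// Pure reducer: fold one stream event into the client-side state.
function reduce(state: ChatState, event: StreamEvent): ChatState {
  switch (event.type) {
    case "text":
      return { ...state, text: state.text + event.delta };
    case "artifact_start":
      return {
        ...state,
        artifacts: {
          ...state.artifacts,
          [event.id]: { kind: event.kind, title: event.title, source: "", complete: false },
        },
      };
    case "artifact_delta": {
      const current = state.artifacts[event.id];
      if (!current) return state; // ignore deltas for artifacts that never started
      return {
        ...state,
        artifacts: {
          ...state.artifacts,
          [event.id]: { ...current, source: current.source + event.delta },
        },
      };
    }
    case "artifact_end": {
      const current = state.artifacts[event.id];
      if (!current) return state; // ignore an end marker for an unknown artifact
      return {
        ...state,
        artifacts: { ...state.artifacts, [event.id]: { ...current, complete: true } },
      };
    }
  }
}
```

Separating chat text from artifact events lets the panel render a partial preview while the source is still streaming, and the `complete` flag marks when it is safe to offer a download.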

Document renderers (docx, pptx, PDF). Built after the artifact system and now in production. Customers wanted proper exports, the work sat on the team’s backlog, and it kept slipping, so I stopped waiting, dropped into the rendering path, and built it myself. I am equally comfortable contributing to production frontend code, a backend endpoint, an LLM prompt, or a document-rendering library.

What comes next (next-generation artifacts)

I have a long-standing interest in dynamic UI generation; there is no reason not to expect an agent to generate a UI for the specific task at hand. This is a monolithic problem that may only become tractable a few model generations from now, but there are already strands I have been exploring, such as:

-

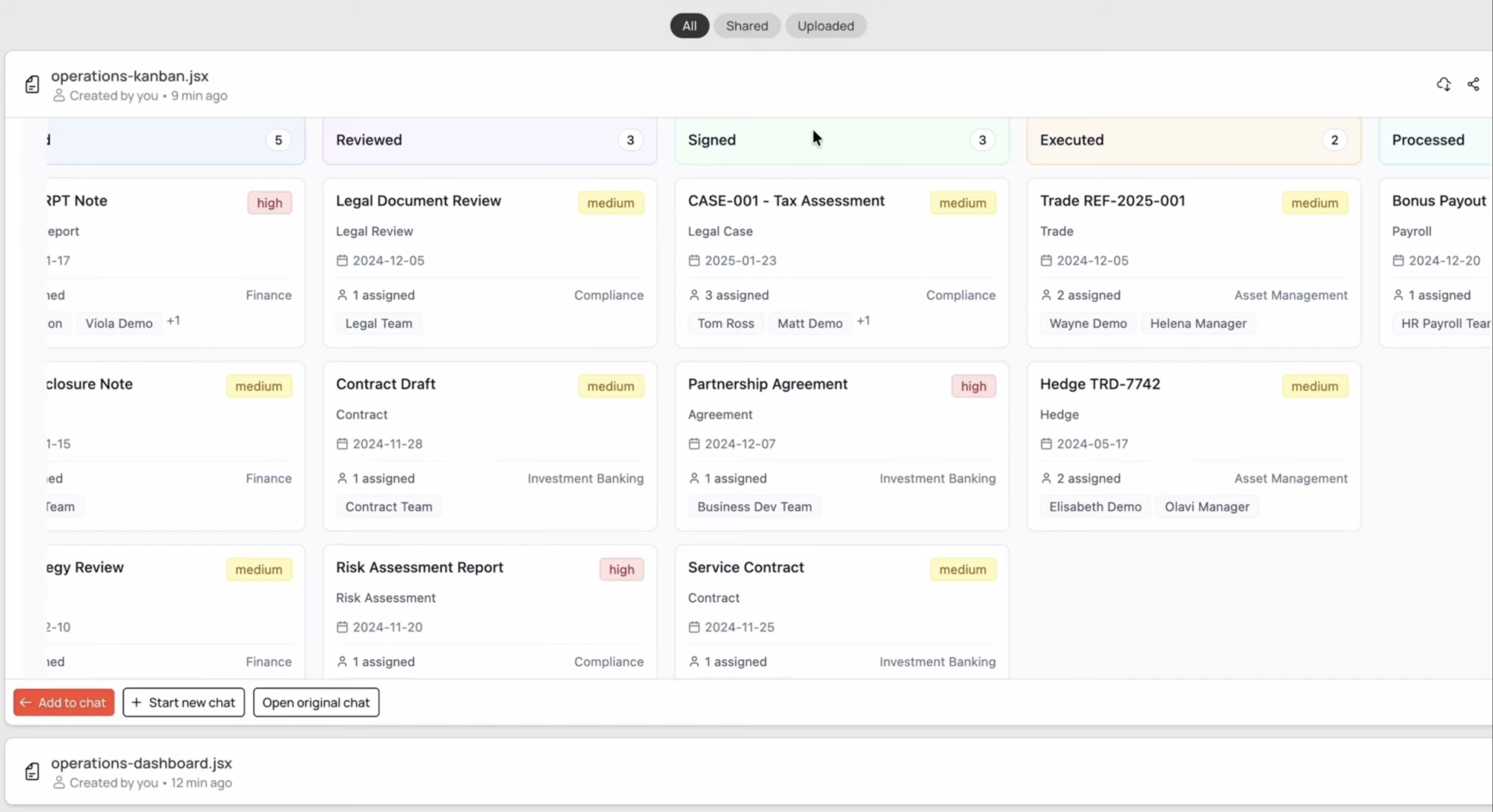

To what extent can we let an agent to invent the UI for the task? It’s a waste of tokens to expect the LLM to invent from scratch how a dashboard or a kanban board should like like as an interface. I have been experimenting with designing and developing a set of A2UI-inspired primitives that the LLM can use to compose an interface from typed blocks, and thus only output structured JSONs that describe the interface.

- What is needed to turn static artifacts into dynamic ones? Artifact systems typically hard-code the data into the generated frontend code. However, the research agents already have to run a series of data queries on the way to an answer. I have been exploring generating the frontend scaffold and the data manifest in parallel, which decouples the data from the frontend.
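The two ideas can be sketched together: a small vocabulary of typed blocks that the model composes as JSON, where blocks point at data by reference instead of embedding it, and a separate manifest that supplies the rows. The block types, the `dataRef` field, and the function names below are hypothetical and serve only to illustrate the shape of the approach.

```typescript
// Hypothetical block vocabulary; not the actual primitives.
type Block =
  | { type: "heading"; text: string }
  | { type: "metric"; label: string; dataRef: string }
  | { type: "table"; columns: string[]; dataRef: string }
  | { type: "stack"; children: Block[] };

type Row = Record<string, string | number>;

// The manifest maps a reference name to rows produced by the data queries.
type DataManifest = Record<string, Row[]>;

// Walk the block tree and collect every data reference it uses.
function collectDataRefs(block: Block): string[] {
  switch (block.type) {
    case "heading":
      return [];
    case "metric":
    case "table":
      return [block.dataRef];
    case "stack": {
      const refs: string[] = [];
      for (const child of block.children) {
        refs.push(...collectDataRefs(child));
      }
      return refs;
    }
  }
}

// Report references that the interface needs but the manifest does not provide.
function missingDataRefs(spec: Block, manifest: DataManifest): string[] {
  return collectDataRefs(spec).filter(
    (ref) => !Object.prototype.hasOwnProperty.call(manifest, ref),
  );
}

// Example interface description, as the model might emit it in JSON form.
const exampleSpec: Block = {
  type: "stack",
  children: [
    { type: "heading", text: "Operations overview" },
    { type: "metric", label: "Revenue", dataRef: "revenue" },
    { type: "table", columns: ["order", "status"], dataRef: "orders" },
  ],
};
```

Because the scaffold only names its data, the same interface can be re-rendered when the manifest is refreshed, and a check like `missingDataRefs` can catch a mismatch between the two before anything reaches the screen.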

Why this work matters

The work shows how my deep background in data science and interaction design produced a prototype that led to strategic product changes.